Modernizing with Google BigQuery: How Migrations to Cloud Data Warehouses Have Evolved

Last Published: Sep 27, 2022

Knowing your customer is the best and easiest way to better serve their needs. And the customer data already available within the enterprise can provide the exact insights needed to maximize that customer experience. The challenge, however, has been to get your arms around that data and make it easier to digest for analysis. Cloud data warehouses like Google BigQuery can help you overcome that challenge by offering improved access to data while leveraging the benefits of cloud and cloud elasticity.

Thanks to Google’s recent acquisition of big-data analytics firm Looker—and to the performance and functionality enhancements Google adds to BigQuery seemingly every day—the adoption of BigQuery is accelerating, with a rapidly growing list of who’s who customers. And with the latest release of Informatica Intelligent Cloud Services (IICS) on the Google Cloud Platform and increasing support for advanced BigQuery functionality, including push down optimization and change data capture, Informatica is well-positioned to partner with Google to get customers onto BigQuery quickly and successfully.

The Evolution of Cloud Migration

I recently found myself responding to a question about how migrations to a cloud data warehouse today differ from migrating database platforms back in the “good ol’ on-prem days.” My answer was simple—migrations to a new cloud database platform don’t occur over a weekend, but rather evolve over time.

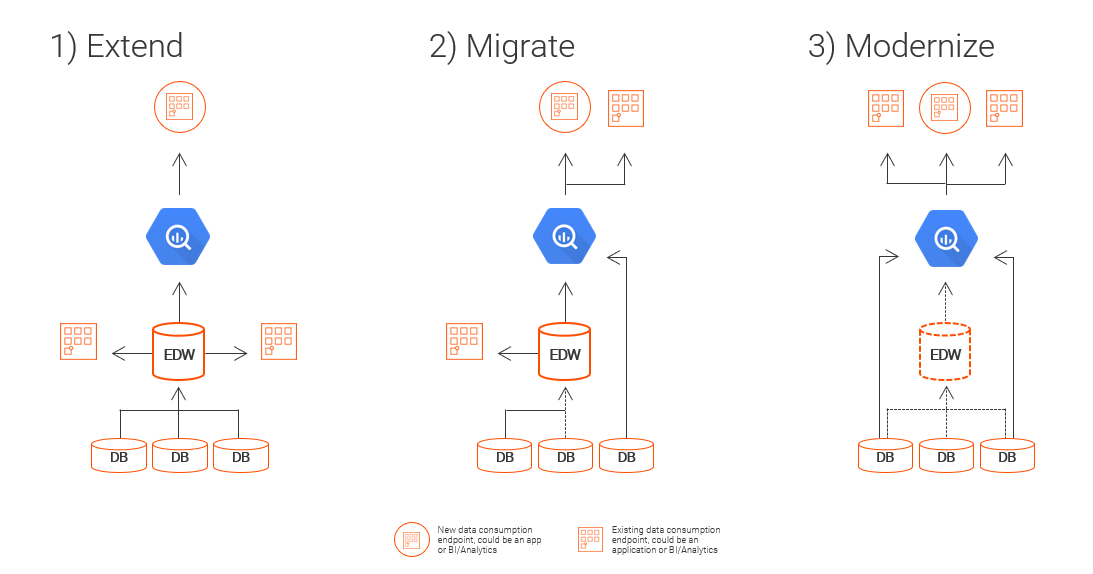

In fact, we’ve seen this evolution occur in three major phases: extend, migrate, and modernize. (Often, an enterprise will have different line-of-business projects at different points in the evolution at the same time.)

Let’s consider a baseline scenario: An enterprise customer has been a longtime user of Netezza and now, faced with its upcoming end of life, has chosen BigQuery as the foundation of their future data warehouse and analytics platform. The sections below follow their journey through each phase.

Extend: Deploy New Analytics Use Cases to BigQuery

When an IT organization is presented with a new use case to support, a great way to begin the journey is to deploy the new use case in the cloud. Continue to source data into the on-premises Netezza enterprise data warehouse (EDW) but replicate the data pipeline to feed BigQuery. Build out the new use cases against BigQuery so that any new development is against the future state, not the legacy environment.

Extension has the added benefit of allowing the IT organization to be responsive to the business, while reducing investment in the legacy infrastructure and shifting investment to the future state. Further, it begins to provide the IT organization with practical and operational experience with BigQuery, which will make the next two phases more efficient and successful.

During this phase, the IT team also has an opportunity to modernize the implementation pattern. For example, extract-load-transform (ELT) patterns, where a customer loads raw data into the cloud data warehouse (CDW) and leverages the power of the exabyte-scale CDW engine to perform transformation, tend to be preferred. For that reason, this is the perfect time to convert from an extract-transform-load (ETL) model (which is likely driving their current EDW) to an ELT model with BigQuery.
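To make the ELT idea concrete, here is a minimal sketch that lands raw data untouched and then lets the database engine do the cleanup and aggregation in SQL. It uses Python's built-in `sqlite3` module purely as a local stand-in for the warehouse so that it runs anywhere; the table names (`raw_orders`, `orders_by_country`) and columns are hypothetical. In an actual BigQuery deployment the load step would be a load job and the transform would be BigQuery SQL.

```python
import sqlite3


def run_elt(raw_rows):
    """Load raw rows as-is, then transform them inside the database engine."""
    conn = sqlite3.connect(":memory:")  # local stand-in for the cloud data warehouse

    # Load: land the source data untouched, with no cleanup in the pipeline.
    conn.execute("CREATE TABLE raw_orders (order_id TEXT, amount TEXT, country TEXT)")
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", raw_rows)

    # Transform: the engine does the casting, cleansing, and aggregation in SQL.
    conn.execute(
        """
        CREATE TABLE orders_by_country AS
        SELECT UPPER(TRIM(country)) AS country,
               SUM(CAST(amount AS REAL)) AS total_amount
        FROM raw_orders
        WHERE amount IS NOT NULL
        GROUP BY UPPER(TRIM(country))
        """
    )
    result = conn.execute(
        "SELECT country, total_amount FROM orders_by_country ORDER BY country"
    ).fetchall()
    conn.close()
    return result


if __name__ == "__main__":
    rows = [("1", "10.5", " us"), ("2", "4.5", "US "), ("3", None, "de"), ("4", "7", "DE")]
    print(run_elt(rows))
```

The point of the pattern is that the pipeline itself stays thin: the messy strings and text-typed amounts are stored as received, and the transformation logic lives in SQL where the warehouse engine can scale it.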

While the actual process is of course more involved, from a conceptual perspective, customers will start by seeding their BigQuery environment with an initial data load from the on-premises Netezza environment. They will then implement a continual update strategy to ensure that BigQuery is always up to date to support the new use case.
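The two conceptual steps above, an initial seed followed by continual updates, can be sketched in a few lines. This is a simplified high-water-mark approach, assuming every source row carries a change timestamp (the hypothetical `updated_at` field); it is not log-based change data capture, and it would not pick up deletes. The in-memory dictionary stands in for the BigQuery target table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Row:
    key: int
    value: str
    updated_at: int  # hypothetical change timestamp on the source row


def initial_load(source):
    """Seed the target with a full copy and remember the newest timestamp seen."""
    target = {row.key: row for row in source}
    watermark = max((row.updated_at for row in source), default=0)
    return target, watermark


def incremental_update(source, target, watermark):
    """Upsert only rows changed since the last run; return the new watermark."""
    changed = [row for row in source if row.updated_at > watermark]
    for row in changed:
        target[row.key] = row  # insert new keys, overwrite existing ones
    return max((row.updated_at for row in changed), default=watermark)


if __name__ == "__main__":
    target, mark = initial_load([Row(1, "a", 1), Row(2, "b", 2)])
    later = [Row(1, "a", 1), Row(2, "b2", 5), Row(3, "c", 4)]
    mark = incremental_update(later, target, mark)
    print(mark, sorted(target))
```

Running the update on a schedule, and persisting the watermark between runs, is what keeps the target current without reloading the full source each time.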

Once these two high-level steps have been completed, the new use case is ready to be developed against BigQuery. The first step of the journey has been taken!

Migrate: Move Strategic Workloads to BigQuery

Once a foundation has been established with BigQuery during the extend phase, the IT organization should take inventory of the workloads that depend on the Netezza data warehouse. This involves more than simply listing the use cases dependent on Netezza. The IT team needs to build a detailed data flow map that identifies the paths of all source data (lineage) and how the data flows to downstream applications (impact analysis). Understanding how the data flows for each individual use case gives the team performing the migration full visibility into that use case’s data, with all dependencies mapped out.
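As a rough illustration of what a data flow map makes possible, the sketch below models flows as a directed graph and answers both questions: what feeds a given asset (lineage) and what depends on it (impact analysis). All asset names in `FLOWS` are hypothetical, and a real catalog would hold far more metadata than a dictionary of edges.

```python
from collections import deque

# Hypothetical data flow map: each key is a producer, each value lists its consumers.
FLOWS = {
    "crm_source": ["netezza.customers"],
    "billing_source": ["netezza.invoices"],
    "netezza.customers": ["churn_dashboard", "netezza.customer_360"],
    "netezza.invoices": ["netezza.customer_360"],
    "netezza.customer_360": ["revenue_report"],
}


def downstream(flows, start):
    """Impact analysis: everything that consumes `start`, directly or indirectly."""
    seen, queue = set(), deque([start])
    while queue:
        node = queue.popleft()
        for consumer in flows.get(node, []):
            if consumer not in seen:
                seen.add(consumer)
                queue.append(consumer)
    return seen


def upstream(flows, start):
    """Lineage: everything that feeds `start`, directly or indirectly."""
    reversed_flows = {}
    for producer, consumers in flows.items():
        for consumer in consumers:
            reversed_flows.setdefault(consumer, []).append(producer)
    return downstream(reversed_flows, start)


if __name__ == "__main__":
    print(sorted(downstream(FLOWS, "netezza.customers")))
    print(sorted(upstream(FLOWS, "revenue_report")))
```

Before moving a table, the downstream set tells the team which reports and applications must be redirected, and the upstream set tells them which pipelines must be repointed to feed the new location.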

With this level of visibility, the IT organization can move the workloads confidently to BigQuery, knowing that they have prepared and planned the move so that all downstream and upstream dependencies will function properly as they connect to BigQuery. This helps ensure a smooth transition and minimal downtime as existing analytics and applications dependent on Netezza are redirected to BigQuery.

Modernize: Leverage the Benefits of BigQuery

Once the center of an enterprise’s data gravity has shifted to BigQuery and strategic workloads, analytics, and applications have been connected to BigQuery, the IT organization now has the opportunity to evaluate the unique strengths of the platform. They can begin to develop a plan to modernize their applications to take the best advantage of BigQuery.

For example, with BigQuery’s RECORD data type that collocates master and detail information in the same table, customers can load nested data structures (e.g., a JSON file from a REST web service) to a single BigQuery table while continuing to use SQL. This provides tremendous compatibility with countless data tools and technologies while benefiting from the extreme performance improvements of BigQuery’s columnar technology.
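To show what colocating master and detail information looks like, the sketch below takes a nested order document and flattens its repeated line items into one row per item. The JSON payload, the field names, and the `my_dataset.orders` table are all assumptions made for illustration. The `UNNEST_SQL` string shows roughly how the same flattening could be expressed in BigQuery SQL against a table with a repeated RECORD column; it is included for comparison and is not executed here.

```python
import json

# Hypothetical nested payload, shaped like something a REST service might return.
ORDER_JSON = """
{"order_id": "A1",
 "customer": {"name": "Ada"},
 "items": [{"sku": "X", "qty": 2}, {"sku": "Y", "qty": 1}]}
"""

# Roughly equivalent BigQuery query (table and column names are assumptions).
UNNEST_SQL = """
SELECT o.order_id, o.customer.name, item.sku, item.qty
FROM my_dataset.orders AS o, UNNEST(o.items) AS item
"""


def unnest_items(order):
    """Return one (order_id, customer name, sku, qty) tuple per nested line item."""
    return [
        (order["order_id"], order["customer"]["name"], item["sku"], item["qty"])
        for item in order["items"]
    ]


if __name__ == "__main__":
    for row in unnest_items(json.loads(ORDER_JSON)):
        print(row)
```

The order header is stored once and the line items travel with it, so no separate detail table or join is needed to read an order back.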

Another modernization opportunity would be to adopt the built-in machine learning (ML) engine of BigQuery. After the initial work of building the ML model has been completed, the integration patterns should account for the model and retrain it as part of the production workloads. This keeps the ML output based on the most recent data.
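One way to fold retraining into a production workload is to have the pipeline issue a `CREATE OR REPLACE MODEL` statement after each data refresh. The sketch below only builds that statement as a string; the model name, training table, and label column are hypothetical, the choice of `logistic_reg` is an assumption, and actually submitting the statement to BigQuery is left to whichever client or integration tool the pipeline already uses.

```python
def build_retrain_statement(model, training_table, label_column, model_type="logistic_reg"):
    """Build a BigQuery ML statement that retrains `model` on the current table contents."""
    return (
        f"CREATE OR REPLACE MODEL `{model}`\n"
        f"OPTIONS (model_type = '{model_type}', input_label_cols = ['{label_column}']) AS\n"
        f"SELECT * FROM `{training_table}`"
    )


if __name__ == "__main__":
    # Hypothetical names; a scheduled job would run this after each data load.
    print(build_retrain_statement("analytics.churn_model", "analytics.churn_features", "churned"))
```

Because the statement replaces the model in place, downstream prediction queries keep referring to the same model name while picking up the refreshed training data.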

Every enterprise’s journey will be different, but the “extend, migrate, modernize” approach ensures responsiveness to the business from the very beginning, while focusing on maintaining business continuity and taking advantage of the BigQuery platform.

With Informatica, you can seamlessly automate integration and management of your data across Google Cloud, SaaS, and on-premises systems to unleash the power of data. Informatica also features support for many of the advanced use cases discussed above, such as push down optimization to drive your ELT integration patterns, change data capture to ensure your BigQuery instance stays up to date in near real-time, the RECORD datatype, operationalizing the BigQuery ML engine, and more.

Next Steps

Accelerate your Google Cloud analytics modernization with Informatica. Get more value from your data and boost your data-driven digital transformation. Informatica Intelligent Cloud Services are now available on the Google Cloud Marketplace.