Master the Art of Data Science With Self-Service Data Preparation

Every AI and machine learning project depends on data preparation. Roughly speaking, data preparation involves cleansing and transforming data before it's used in analytics. To explain why it's so important, let's turn to an ancient board game.

Go is a popular board game that originated in ancient China. The aim in Go is to surround more territory than your opponent. Despite its relatively simple rules, Go is extremely complex, and it was long believed that a machine could never beat a top human player. That was until a company named DeepMind Technologies developed a computer program called AlphaGo that started beating professional human Go players.

AlphaGo combines a search algorithm with knowledge previously "learned" through machine learning. Specifically, this knowledge is captured in deep neural networks that were trained extensively on both human games and games the program played against itself. But the most important reason AlphaGo can beat humans at this game is that researchers trained its machine learning models on the right, accurate datasets. As with AlphaGo and the game of Go, enterprises also need the right data to drive their AI/ML and data science initiatives.

The Biggest Challenge in Accelerating Data Science Projects? It’s Data Preparation.

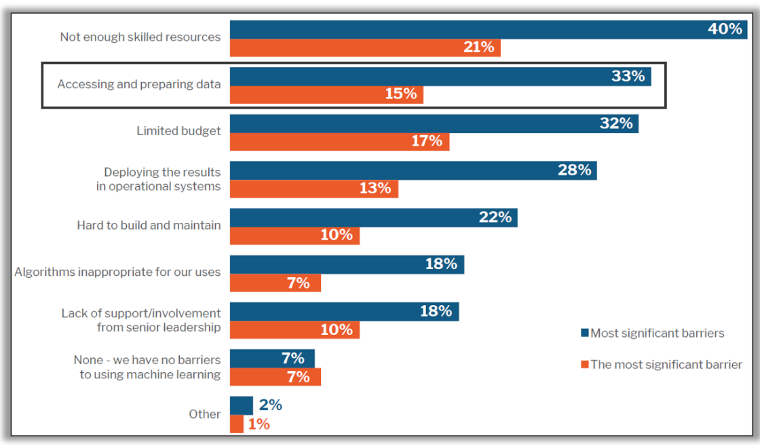

According to a 451 Research survey of people directly involved in AI and ML initiatives, 33% of respondents cited accessing and preparing data as a barrier to the use of machine learning.

Many industry analysts have found that the biggest challenge in accelerating data science projects is that data scientists spend about 80% of their time cleaning and preparing data and only 20% on analysis. In fact, there is a common joke among data scientists that they spend 80% of their time cleaning data and the remaining 20% complaining about cleaning data.

A common problem for organizations is accessing data that comes from multiple sources, such as databases, spreadsheets, logs, IoT sensors, machine data, and business applications, and then getting it ready for analytics consumption. A lot of time is spent on legacy ETL processes that load data into a data warehouse, database, or data lake, where it is still not ready for reporting or modeling. The struggle to get data ready for analytics consumption affects the entire DataOps team: data analysts, data engineers, and data scientists.

DataOps teams are slowed down by bottlenecks in moving data from point A to point B. It can take months to build reports and turn data around for analytics consumption. But the landscape is changing, and enterprises must move quickly to be proactive in their decision making.

Modern Enterprise Data Preparation Is the Need of the Hour for AI/ML Projects

So what exactly is data preparation? Data preparation ensures that data is consistent and of high quality. It involves rationalizing and validating data to make sure it is formatted consistently and will still be understood once removed from its source. It can involve changing formats, removing duplicates, and similar tasks, all with the aim of making data easier to process and analyze.
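To make these steps concrete, here is a minimal Python sketch of format standardization, validation, and deduplication on a handful of records. It is a generic illustration, not how any particular product works: the sample records, the field names (`customer_id`, `email`, `signup`), the accepted date formats (ISO and day-first `DD/MM/YYYY` are assumed), and the simple validation rules are all hypothetical.

```python
from datetime import datetime

# Hypothetical raw records from two sources with inconsistent formatting.
RAW_RECORDS = [
    {"customer_id": " 001 ", "email": "Ana@Example.com", "signup": "2021-03-05"},
    {"customer_id": "001", "email": "ana@example.com", "signup": "05/03/2021"},
    {"customer_id": "002", "email": "bo@example", "signup": "2021-04-10"},
    {"customer_id": "003", "email": "cy@example.org", "signup": "2021/13/01"},
    {"customer_id": "004", "email": " Dee@Example.net ", "signup": "15/07/2021"},
]

# Assumed input date formats: ISO 8601 and day-first DD/MM/YYYY.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y")


def normalize_date(value):
    """Convert a date in any of DATE_FORMATS to ISO 8601; return None if unparseable."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None


def clean_record(record):
    """Standardize formats: trim IDs, lowercase emails, convert dates to ISO 8601."""
    return {
        "customer_id": record["customer_id"].strip(),
        "email": record["email"].strip().lower(),
        "signup": normalize_date(record["signup"]),
    }


def is_valid(record):
    """Simple illustrative rules: email has a dotted domain, date was parsed."""
    local, _, domain = record["email"].partition("@")
    return bool(local) and "." in domain and record["signup"] is not None


def prepare(records):
    """Clean and validate each record, then keep the first record per customer_id."""
    seen, prepared, rejected = set(), [], []
    for raw in records:
        rec = clean_record(raw)
        if not is_valid(rec):
            rejected.append(rec)  # set aside for review instead of silently dropping
            continue
        if rec["customer_id"] in seen:
            continue  # duplicate after cleaning: keep the first occurrence
        seen.add(rec["customer_id"])
        prepared.append(rec)
    return prepared, rejected


if __name__ == "__main__":
    prepared, rejected = prepare(RAW_RECORDS)
    print("prepared:", prepared)
    print("rejected:", rejected)
```

With this sample data, the two rows for customer 001 only become recognizable as duplicates after their IDs, emails, and dates are standardized, which is why cleaning runs before deduplication. The rows with a malformed email and an impossible date end up in `rejected`.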

Most data science projects start with accessing data and putting it into a format that is relevant to the business need. In the traditional approach, data scientists have to ask developers, IT, and other technical staff for data, because those teams are the only ones with access to it and with knowledge of the datasets. This process creates bottlenecks and puts IT and data science teams at loggerheads.

How Can Informatica Help?

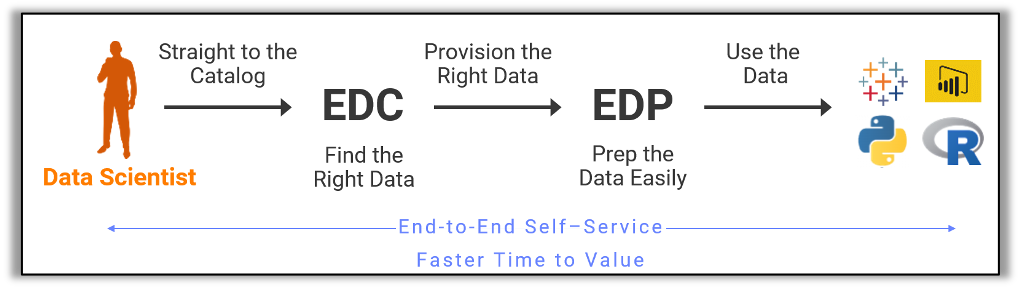

Informatica helps data scientists find the right data using our Enterprise Data Catalog (EDC) and then provision it using Enterprise Data Preparation (EDP). With this solution, data scientists and data engineers can prepare data for advanced analytics or AI/ML use. We call it flipping the 80/20 rule in the data scientist's favor.

Informatica takes an end-to-end approach to data preparation, in contrast to standalone data preparation tools. We can catalog all the data in a data lake or anywhere in the enterprise, operationalize the data using Data Engineering Integration (DEI), apply data quality rules, and mask the data for privacy. All of this is powered by the Informatica® CLAIRE® engine, the industry's first metadata-driven AI.

With Informatica Enterprise Data Preparation, data scientists can rapidly discover the data they have in a data lake or anywhere in the enterprise using Google-like semantic search. Search results include certified datasets; key attributes of the data, such as data domains, users, and usage; and other related data assets. Users can easily visualize data sources, track datasets from source to destination, and enable effective data-driven business transformations with end-to-end data lineage and impact analysis capabilities.
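Lineage and impact analysis can be pictured as traversals of a directed graph of datasets. The sketch below is a generic Python illustration under that assumption, not Informatica's implementation or API: the dataset names and the `LINEAGE` graph are hypothetical. Impact analysis follows edges downstream ("what is affected if this source changes?"), while lineage follows them upstream ("where did this dataset come from?").

```python
from collections import deque

# Hypothetical lineage graph: each dataset maps to the datasets derived from it.
LINEAGE = {
    "crm.customers": ["lake.customers_raw"],
    "erp.orders": ["lake.orders_raw"],
    "lake.customers_raw": ["lake.customers_clean"],
    "lake.orders_raw": ["lake.orders_clean"],
    "lake.customers_clean": ["mart.customer_360"],
    "lake.orders_clean": ["mart.customer_360", "mart.sales_report"],
    "mart.customer_360": ["ml.churn_features"],
}


def downstream(dataset, graph=LINEAGE):
    """Impact analysis: all datasets reachable from `dataset`, in breadth-first order."""
    seen, queue, order = {dataset}, deque([dataset]), []
    while queue:
        for child in graph.get(queue.popleft(), []):
            if child not in seen:
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


def upstream(dataset, graph=LINEAGE):
    """Lineage: all datasets that feed `dataset`, found by reversing every edge."""
    reverse = {}
    for parent, children in graph.items():
        for child in children:
            reverse.setdefault(child, []).append(parent)
    return downstream(dataset, reverse)


if __name__ == "__main__":
    print("impact of erp.orders:", downstream("erp.orders"))
    print("sources of mart.customer_360:", upstream("mart.customer_360"))
```

Breadth-first order is a design choice here: it lists directly affected datasets before more distant ones, which matches how an impact report is usually read.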

Informatica Enterprise Data Preparation uses the CLAIRE engine and advanced machine learning algorithms to automate tasks in the data preparation pipeline, such as data discovery, inferring relationships between datasets, pattern recognition, recommending alternative datasets, and recommending recipes.
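To give a feel for what pattern recognition means in data preparation, here is a deliberately simple, rule-based Python sketch that infers a column's data domain from its values. It is a stand-in for illustration only, not the machine learning approach the CLAIRE engine uses: the domain names, regular expressions, and the 80% match threshold are all hypothetical.

```python
import re

# Hypothetical data-domain patterns; real catalogs use far richer rules and ML models.
DOMAIN_PATTERNS = {
    "email": re.compile(r"^[^@\s]+@[^@\s]+\.[A-Za-z]{2,}$"),
    "us_zip_code": re.compile(r"^\d{5}(-\d{4})?$"),
    "iso_date": re.compile(r"^\d{4}-\d{2}-\d{2}$"),
}


def infer_domain(values, threshold=0.8):
    """Return the domain whose pattern matches the largest share of non-empty
    values, if that share is at least `threshold`; otherwise return None."""
    sample = [v.strip() for v in values if v and v.strip()]
    if not sample:
        return None
    best_domain, best_share = None, 0.0
    for domain, pattern in DOMAIN_PATTERNS.items():
        share = sum(1 for v in sample if pattern.match(v)) / len(sample)
        if share > best_share:
            best_domain, best_share = domain, share
    return best_domain if best_share >= threshold else None


if __name__ == "__main__":
    columns = {
        "contact": ["ana@example.com", "bo@example.org", "n/a", "cy@example.net", "dee@example.com"],
        "zip": ["94105", "10001-1234", "60601", "02139", "73301"],
        "notes": ["call back", "VIP", "", "follow up"],
    }
    for name, values in columns.items():
        print(name, "->", infer_domain(values))
```

The threshold lets a column tolerate a few dirty values (such as the `n/a` in `contact`) while still being tagged, and a column with no dominant pattern is left untagged rather than guessed.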