Why You Need FinOps-Powered Data Engineering to Control Cloud Costs

Last Published: Mar 18, 2025


Organizations continue to invest in cloud computing and innovate on public clouds to drive their digital transformation initiatives and stay relevant in a competitive climate.

However, during this necessary journey, many have encountered unexpected (and sometimes exorbitant) cloud costs. That is not surprising: According to Flexera, more than half of organizations spend at least $2.4 million in the cloud each year.[1] And for some large companies, the bill can top $100 million per year.[2]

It’s easy to overspend on cloud services, especially if you have no visibility into utilization and no way to predict it. To survive in today’s digital landscape, you need transparency into your costs and cost governance built into your overall strategy, all while continuing to innovate. Not easy, we get it.

That’s why cloud financial operations, or FinOps, has gained such popularity. The premise is simple: Organizations should be able to keep working on breakthrough products and features without exceeding their budget. Let’s explore this concept.

FinOps Explained

The FinOps Foundation defines FinOps as a discipline that combines financial management principles with cloud operations and engineering to give organizations a better understanding of their cloud spend.[3] The goal of FinOps is to reduce costs and ensure that every dollar spent in the cloud goes to the initiatives that deliver the highest ROI while accelerating business outcomes. But this raises the question: What does FinOps have to do with data engineering? Let’s find out.

The Role FinOps Plays in Data Engineering

Data engineering is the process of discovering, designing and building the data infrastructure that helps data owners and data users use and analyze raw data from multiple sources and formats. Because data engineering involves data workflows and pipelines, it requires a high volume of data processing. That in turn requires a scale-out, distributed environment that demands a large amount of compute, storage and other cloud resources. This is where FinOps can help you budget, monitor and govern the costs associated with the cloud resources used for data engineering workloads.
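To make the idea of budgeting and monitoring pipeline spend concrete, here is a minimal Python sketch. It is only an illustration under stated assumptions: the `PipelineRun` record, the two rate constants and the helper functions are hypothetical names invented for this example, the prices are made up, and none of this reflects an Informatica or cloud provider API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PipelineRun:
    """One execution of a data pipeline (hypothetical record shape)."""
    pipeline: str
    nodes: int          # worker nodes used by the run
    hours: float        # wall-clock runtime in hours
    storage_gb: float   # intermediate data written during the run


# Illustrative rates only; real prices vary by provider, region and instance type.
NODE_HOUR_RATE_USD = 0.50
STORAGE_GB_RATE_USD = 0.02


def run_cost(run: PipelineRun) -> float:
    """Estimate the cost of one run as compute cost plus storage cost."""
    compute = run.nodes * run.hours * NODE_HOUR_RATE_USD
    storage = run.storage_gb * STORAGE_GB_RATE_USD
    return compute + storage


def spend_by_pipeline(runs: list[PipelineRun]) -> dict[str, float]:
    """Total the estimated cost of all runs, grouped by pipeline name."""
    totals: dict[str, float] = {}
    for run in runs:
        totals[run.pipeline] = totals.get(run.pipeline, 0.0) + run_cost(run)
    return totals


def budget_status(runs: list[PipelineRun], monthly_budget_usd: float) -> dict:
    """Compare total estimated spend with a monthly budget."""
    spent = sum(run_cost(run) for run in runs)
    return {
        "spent": spent,
        "remaining": monthly_budget_usd - spent,
        "over_budget": spent > monthly_budget_usd,
    }


if __name__ == "__main__":
    runs = [
        PipelineRun("nightly_sales", nodes=4, hours=2.0, storage_gb=100.0),
        PipelineRun("weekly_rollup", nodes=10, hours=3.0, storage_gb=500.0),
    ]
    print(spend_by_pipeline(runs))
    print(budget_status(runs, monthly_budget_usd=30.0))
```

Grouping spend by pipeline is what turns a single cloud bill into something a data engineering team can act on, because it shows which workloads drive the cost.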

But don’t just take our word for it. Below are pain points shared by data architects at large enterprises who run data engineering pipelines at scale. Based on their feedback, it’s clear there is a need for a FinOps-enabled data engineering solution:

- “There is ETL and then there is ELT. There have been instances where ELT has resulted in budget/cost overruns in spite of vendor discounts. How do I know which is the more cost-efficient solution to run my data pipelines?”

- “Data pipelines are just one other cloud workload along with other workloads that use my cloud resources. I need a way to monitor and budget the cloud infrastructure cost for running these data pipelines. How can I accomplish this?”

- “I have data pipelines in place that run daily, weekly, nightly and monthly. There is seasonality with these pipelines, and missing the deadline to complete the jobs will have a major impact on the business from a revenue standpoint. How do I ensure cloud resources used are for high-impact projects at any given time?”

The Informatica FinOps Strategy and Vision

Our FinOps vision is to empower organizations to efficiently manage, govern and monitor costs with transparency throughout the data management lifecycle while delivering business outcomes.

Which brings up a key question: What does Informatica offer to data engineering teams?

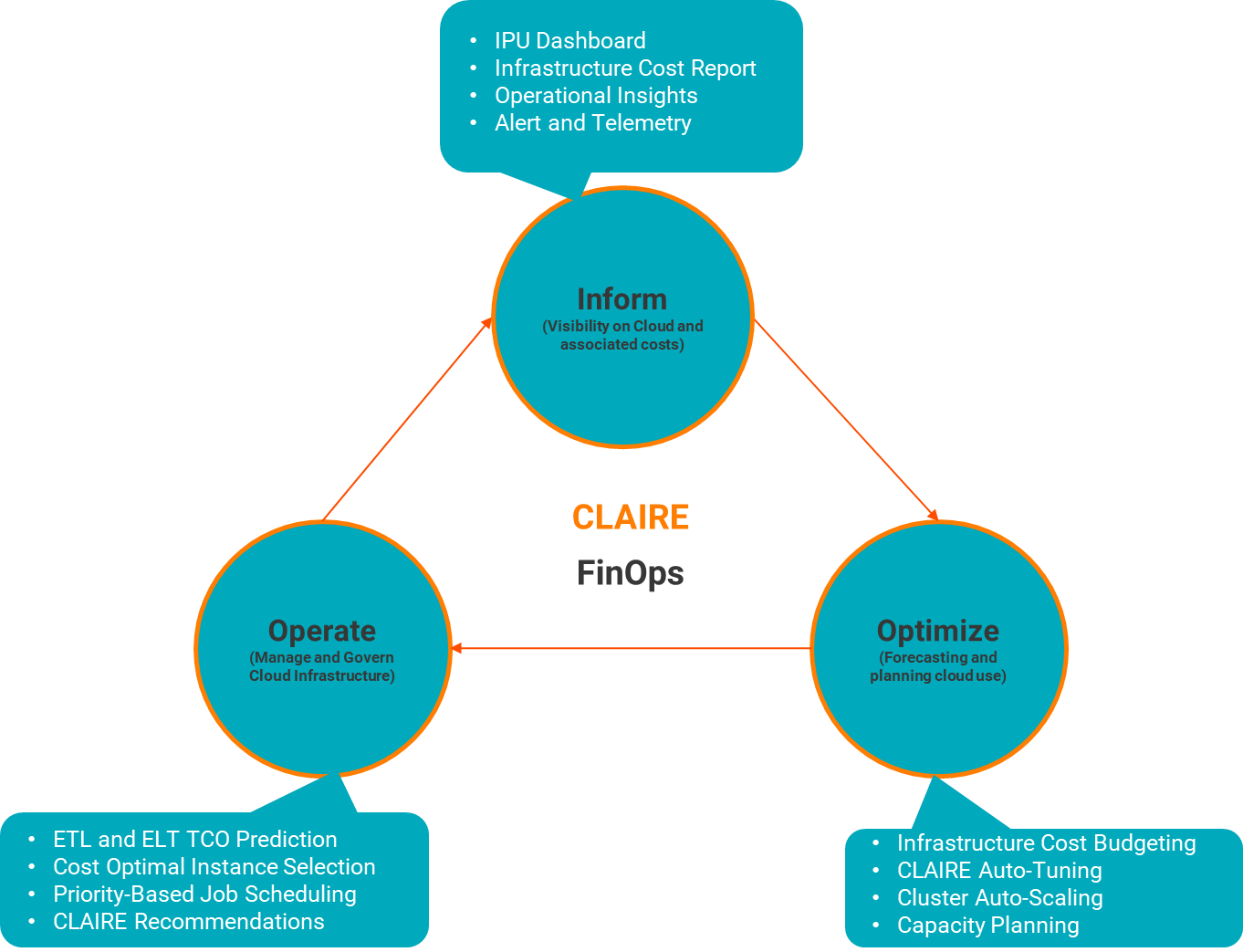

We’re excited to introduce new FinOps capabilities as part of the October release in Informatica Cloud Data Integration, a service of Intelligent Data Management Cloud (IDMC), an AI-powered platform that was purpose-built from the ground up for data engineering teams. Figure 1 summarizes the capabilities we will offer. We organized them around the three phases of the FinOps lifecycle established by the FinOps Foundation: inform, optimize and operate.[4]

- Inform: Provides visibility for creating shared accountability
  - Summary of the overall cloud infrastructure cost saved due to intelligent optimizations provided by CLAIRE, our AI engine
  - Ability to view and monitor individual cluster cost graph over a period of time

- Optimize: Identifies efficiency opportunities and determines their value
  - Ability to set a budget so that the overall cloud infrastructure cost incurred due to advanced clusters does not exceed budget
  - Ability for data engineers to fine-tune data pipelines for cost and performance using CLAIRE’s active auto-tuning
  - Access to the dynamic scale-out and scale-in of nodes in the cluster based on workload characteristics with advanced cluster auto-scaling

- Operate: Defines and implements processes that achieve the goals of technology, finance and business
  - Ability for data engineers to schedule jobs based on priority and expected overall execution time
  - Design-time insights and recommendations based on CLAIRE’s intelligence to help decide the best engine to schedule jobs for cost and performance needs (i.e., extract, transform, load (ETL) vs. extract, load, transform (ELT))
  - Cost and performance-based CLAIRE recommendations during runtime to fine-tune clusters to ensure the highest ROI from cloud infrastructure

Figure 1. FinOps capabilities in Informatica Cloud Data Integration.
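The idea of scheduling jobs by priority and expected execution time while staying within a budget can be sketched in a few lines of Python. This is a toy greedy planner, not a description of how Informatica Cloud Data Integration schedules work: the `Job` fields, the `plan_schedule` function and the node-hour rate are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Job:
    """A pipeline job waiting to be scheduled (hypothetical shape)."""
    name: str
    priority: int          # 1 is the most important
    expected_hours: float  # expected overall execution time
    nodes: int             # cluster nodes the job is expected to use


# Illustrative rate only; not a real price.
NODE_HOUR_RATE_USD = 0.50


def estimated_cost(job: Job) -> float:
    """Estimate compute cost from node count and expected runtime."""
    return job.nodes * job.expected_hours * NODE_HOUR_RATE_USD


def plan_schedule(jobs: list[Job], budget_usd: float) -> tuple[list[str], list[str]]:
    """Greedily admit jobs in priority order while the budget allows.

    Jobs are ordered by priority first and by shorter expected runtime
    second. A job whose estimated cost exceeds the remaining budget is
    deferred, and the planner moves on to the next job.
    """
    ordered = sorted(jobs, key=lambda j: (j.priority, j.expected_hours))
    scheduled: list[str] = []
    deferred: list[str] = []
    remaining = budget_usd
    for job in ordered:
        cost = estimated_cost(job)
        if cost <= remaining:
            scheduled.append(job.name)
            remaining -= cost
        else:
            deferred.append(job.name)
    return scheduled, deferred


if __name__ == "__main__":
    jobs = [
        Job("monthly_close", priority=1, expected_hours=4.0, nodes=8),
        Job("adhoc_report", priority=3, expected_hours=1.0, nodes=2),
        Job("nightly_load", priority=1, expected_hours=2.0, nodes=4),
        Job("ml_backfill", priority=2, expected_hours=10.0, nodes=10),
    ]
    print(plan_schedule(jobs, budget_usd=25.0))
```

A greedy pass like this is simple to reason about, but it does not guarantee the best use of the budget. A production scheduler would also need to account for deadlines, dependencies between jobs and actual (not estimated) cost.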

Informatica FinOps-Powered Data Integration Solution

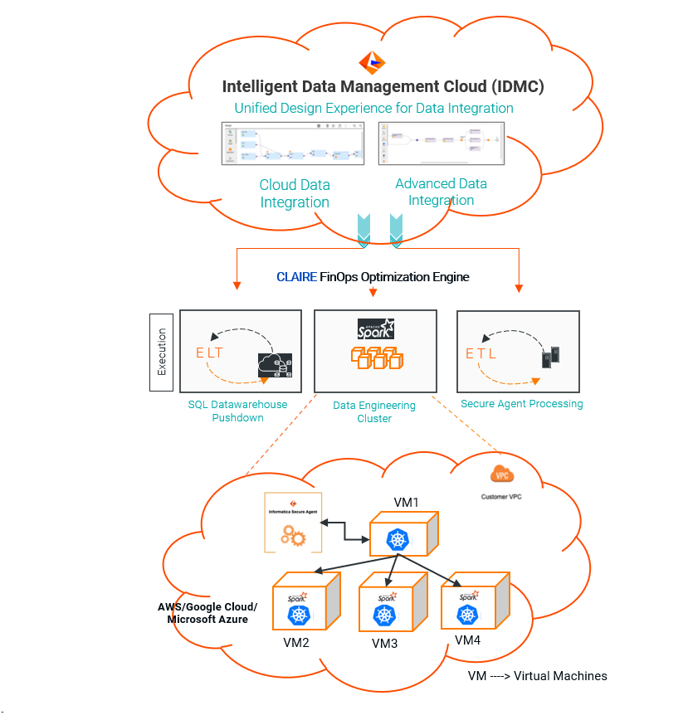

The above-mentioned capabilities are offered in a fully managed advanced cluster deployment option (Figure 2). Using the intelligence of CLAIRE, we abstract away the runtime complexities of ETL and ELT based on cost and performance levers.

This means data engineers and developers can use a single, unified, no-code canvas for virtually all their data engineering needs. They can also process and transform structured and unstructured data at virtually any scale without having to explicitly choose between ETL and ELT up front.

Figure 2. Fully managed advanced cluster deployment.
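As a rough illustration of weighing cost against performance when choosing between ETL and ELT, consider the Python sketch below. It does not describe how CLAIRE makes its recommendations, which this post does not detail. The `EngineEstimate` type, the `pick_engine` function and every number are assumptions made up for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class EngineEstimate:
    """Estimated cost and runtime for one execution option (hypothetical)."""
    engine: str          # for example "ETL" or "ELT"
    est_cost_usd: float
    est_hours: float


def pick_engine(
    estimates: list[EngineEstimate], deadline_hours: float
) -> Optional[EngineEstimate]:
    """Return the cheapest option that still finishes before the deadline.

    Ties on cost are broken by the shorter estimated runtime. Returns None
    when no option is expected to meet the deadline.
    """
    feasible = [e for e in estimates if e.est_hours <= deadline_hours]
    if not feasible:
        return None
    return min(feasible, key=lambda e: (e.est_cost_usd, e.est_hours))


if __name__ == "__main__":
    # Made-up estimates for a single pipeline.
    options = [
        EngineEstimate("ETL", est_cost_usd=12.0, est_hours=3.0),
        EngineEstimate("ELT", est_cost_usd=9.0, est_hours=5.0),
    ]
    print(pick_engine(options, deadline_hours=6.0))
    print(pick_engine(options, deadline_hours=4.0))
```

Treating the deadline as a hard constraint and cost as the quantity to minimize is one simple way to express the two levers. Other weightings are equally plausible.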

We believe this new FinOps-powered solution will help you drive innovation, and more importantly, thrive in today’s digital economy.

Next Steps

In a future blog post, I will cover details on implementing FinOps features in Informatica Cloud Data Integration. In the meantime, get started on your Informatica journey with these valuable resources.

- Translate raw data into actionable insights. Try these new and advanced capabilities in the Informatica AI-powered data integration service with a free 60-day trial to load, transform and integrate your data.

- Optimize your cloud data management and data integration strategies. Read the ROI Guidebook on Informatica Cloud Data Integration Services by Nucleus Research to find out how.

[1] https://www.eweek.com/cloud/cloud-computing-2022/

[2] https://fortune.com/2022/08/30/cost-cloud-computing-tech-firms-prepared-it-leadership-joe-atkinson/

[3] https://www.finops.org/introduction/what-is-finops/

[4] https://www.finops.org/framework/#:~:text=FinOps%20Phases,opportunities%20and%20determine%20their%20value.