What Is Data Quality?

Key components, why it’s important and how to better manage data quality

What Is Data Quality?

Data quality refers to the overall utility of a dataset and its ability to be easily processed and analyzed for other uses. It is an integral part of data governance that ensures that your organization’s data is fit for purpose. Data quality dimensions include completeness, conformity, consistency, accuracy and integrity. Managing these helps your data governance, analytics and artificial intelligence (AI) / machine learning (ML) initiatives deliver reliable and trustworthy results.

Data quality rules and continuous monitoring are essential for long-term success.

What Do I Need to Know about Data Quality?

Quality data is useful data. Poor-quality data, on the other hand, can have negative implications, including potential business risks, financial impacts and reputational damage. To be of high quality, data must be consistent and unambiguous. Data quality issues often result from database merges or system and cloud integration processes. In these cases, data fields that should be compatible are not, because of schema or format inconsistencies. More broadly, data quality problems can also be caused by human error, system errors and data corruption.
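To make the merge problem concrete, here is a minimal Python sketch of two source systems that describe the same customer with different field names, ID types and date formats. The source names (`crm`, `billing`), field names, date formats and mapping tables are all hypothetical. The point is that the records only become comparable after both are mapped to one target schema and one value format (ISO 8601 dates here).

```python
from datetime import datetime

# Hypothetical per-source mappings: source field name -> target field name
SCHEMA_MAP = {
    "crm": {"cust_id": "customer_id", "signup": "signup_date"},
    "billing": {"CustomerID": "customer_id", "CreatedOn": "signup_date"},
}

# Hypothetical date formats used by each source system
DATE_FORMATS = {"crm": "%m/%d/%Y", "billing": "%d.%m.%Y"}


def harmonize(record: dict, source: str) -> dict:
    """Rename fields to the target schema and normalize values."""
    mapping = SCHEMA_MAP[source]
    out = {mapping[key]: value for key, value in record.items() if key in mapping}
    # Parse the source-specific date format and emit ISO 8601 (YYYY-MM-DD)
    parsed = datetime.strptime(out["signup_date"], DATE_FORMATS[source])
    out["signup_date"] = parsed.date().isoformat()
    # Store IDs as trimmed strings so 42 and "42" compare equal after the merge
    out["customer_id"] = str(out["customer_id"]).strip()
    return out


crm_row = {"cust_id": "42", "signup": "03/07/2023"}
billing_row = {"CustomerID": 42, "CreatedOn": "07.03.2023"}

print(harmonize(crm_row, "crm"))          # {'customer_id': '42', 'signup_date': '2023-03-07'}
print(harmonize(billing_row, "billing"))  # {'customer_id': '42', 'signup_date': '2023-03-07'}
```

Without this step, the two raw rows would look like different customers: the IDs have different types, and the same date reads as "03/07/2023" in one system and "07.03.2023" in the other.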

Data quality problems can stem from incompleteness, inaccuracy, inconsistency or data duplication, where multiple copies of the same data exist, resulting in discrepancies. Data with any of these issues can undergo data cleansing to raise its quality. Effective data validation and data governance processes also help ensure data quality.

Why Is Effective Data Quality Management Important?

Data quality activities involve data rationalization and data validation. These efforts are often needed when integrating disparate applications, for example during mergers and acquisitions, or when siloed data systems within a single organization are combined for the first time in a cloud data warehouse or data lake. Data quality is also critical to the efficiency of horizontal business applications such as enterprise resource planning (ERP) and customer relationship management (CRM).

What Are the Benefits of Data Quality?

When data is of excellent quality, it can be easily processed and analyzed. This leads to insights that help the organization make better decisions. High-quality data is essential for analytics, AI initiatives and business intelligence efforts. As such, maintaining high data quality standards can help organizations ensure regulatory compliance, improve the customer experience, boost data-driven innovation and enhance decision-making capabilities. This provides benefits to companies across industries, from helping to improve patient care in healthcare to optimizing supply chain operations in retail to enabling personalization of banking offers in financial services.

In addition to helping your organization extract more value from its data, the process of data quality management improves organizational efficiency and productivity. It also reduces the risks and costs associated with poor quality data. Data quality is, in short, the foundation of the trusted data that drives digital transformation. A strategic investment in data quality will pay off repeatedly, in multiple use cases, across the enterprise.

The Foundational Components of Data Quality

The success of data quality management is measured by a few factors. These include how confident you are in the accuracy of your analysis, how well the data supports various initiatives and how quickly those initiatives deliver tangible strategic value.

To achieve all those goals, your data quality tools must be able to:

- Support virtually all use cases. Data migration requires different data quality metrics than next-gen analytics. Avoid a one-size-fits-all approach. Instead, go with one integrated solution that lets you choose the right capabilities for your particular use cases. For example, if you are migrating data, you first need to understand what data you have (profiling) before moving it. For an analytics use case, you want to cleanse, parse, standardize and de-duplicate data.

- Accelerate and scale. Data quality is equally critical for web services, batch, big data and real-time workloads. Data needs to be trusted, secure, governed and fit for use regardless of where it resides (on-premises or in the cloud) or its velocity (batch, real-time, sensor/IoT and so on). Look for a solution that scales to fit any workload across all departments. You may want to start by focusing on the quality of data within one application or process, using out-of-the-box business rules and accelerators plus role-based self-service tools to profile, prepare and cleanse your data. Then, when you’re ready to expand the program, you can deploy the same business rules and cleansing processes across all applications and data types at scale.

- Deliver a flexible user experience. Data scientists, data stewards and data consumers all have specific capabilities, skill sets and interests in working with data. Choose a data quality solution that tailors the user experience by role. That way, all your team members can achieve their goals without IT intervention.

- Automate critical tasks. The volume, variety and velocity of today’s enterprise data make manual data quality management impossible. An AI-powered data management solution can automatically assess data quality and make intelligent recommendations that streamline key tasks, such as data discovery and data quality rule creation across the organization.
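For the analytics use case in the first item, cleansing usually runs in a fixed order: parse and standardize values first, then de-duplicate on the standardized form. The minimal Python sketch below assumes a hypothetical contact list whose duplicates differ only in case, whitespace and phone punctuation, and it assumes email addresses can be compared case-insensitively. Real matching tools apply far richer rules, so treat this as an illustration of the order of operations rather than a production matcher.

```python
import re

# Hypothetical raw contacts; the first two describe the same person
raw_contacts = [
    {"name": "  Ada Lovelace ", "email": "ADA@Example.com", "phone": "(555) 010-2000"},
    {"name": "ada lovelace", "email": "ada@example.com ", "phone": "555.010.2000"},
    {"name": "Alan Turing", "email": "alan@example.com", "phone": "555-010-3000"},
]


def standardize(contact: dict) -> dict:
    """Parse and standardize one contact record."""
    return {
        # Collapse repeated whitespace and use title case for names
        "name": " ".join(contact["name"].split()).title(),
        # Assumption: emails are matched case-insensitively
        "email": contact["email"].strip().lower(),
        # Keep digits only, so punctuation differences disappear
        "phone": re.sub(r"\D", "", contact["phone"]),
    }


def deduplicate(contacts: list[dict]) -> list[dict]:
    """Keep the first record seen for each standardized email address."""
    seen = set()
    unique = []
    for contact in contacts:
        if contact["email"] not in seen:
            seen.add(contact["email"])
            unique.append(contact)
    return unique


clean = deduplicate([standardize(c) for c in raw_contacts])
for row in clean:
    print(row)
```

De-duplicating the raw rows directly would keep all three, because "ADA@Example.com" and "ada@example.com " are different strings until they are standardized.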

How to Avoid Data Quality Problems

To avoid problems, it’s important to understand the key attributes of data quality. Data quality is commonly assessed along seven core dimensions:

- Accuracy: The data reflects the real-world objects and/or events it is intended to model. Accuracy is often measured by how the values agree with an information source that is known to be correct. This helps ensure accurate customer information for personalized / targeted marketing campaigns, financial data for precise financial reporting, product data for e-commerce platforms and inventory data for efficient supply chain management.

- Completeness: The data makes all required records and values available. This helps create comprehensive customer profiles, ensure complete transactional data to inform analysis and forecasting, maintain complete patient medical histories for accurate diagnosis and capture complete feedback data to obtain reliable customer insights.

- Consistency: Data values drawn from multiple locations do not conflict with each other across a record or along all values of a single attribute. Note that consistent data is not necessarily accurate or complete. This helps enforce consistent data formats and structure for effective data integration, ensure consistent product categorization and attributes for product comparisons, maintain consistent data definitions and codes for analysis, and establish consistent data entry standards to prevent duplicates.

- Timeliness: Data is updated as frequently as necessary, including in real time, to ensure that it meets user requirements for accuracy, accessibility and availability. This helps deliver real-time stock market data to inform timely investment decisions, provide up-to-date customer information for immediate response and personalized interactions, offer real-time operational data, ensure timely logistics data for accurate tracking information and update healthcare records in real time.

- Validity: The data conforms to defined business rules and falls within allowable parameters when those rules are applied. This helps ensure the validity of credit card information for secure online transactions and fraud prevention, validate the accuracy of addresses for shipping, verify scientific research data for study outcomes and ensure the validity of legal documents and contracts.

- Duplication: The data does not have multiple, unnecessary representations of the same data objects within the data set. The inability to maintain a single representation for each entity across your systems poses numerous vulnerabilities and risks. Preventing duplications helps prevent multiple records for the same customer, maintain accuracy in store inventory and ensure accurate patient medical records.

- Uniqueness: No record exists more than once within the data set, even if it exists in multiple locations. Every record can be uniquely identified and accessed within the data set and across applications. This helps verify unique product identifiers to prevent errors in inventory management, provide unique patient identifiers in healthcare systems, enforce unique employee identifiers for accurate HR records and establish unique data keys for data integrity.
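Most of these dimensions can be expressed as simple ratios over a dataset. The Python sketch below scores a small, made-up customer table on completeness, validity, uniqueness (which also captures duplication), timeliness and accuracy. The required fields, the email pattern, the 365-day freshness window and the "trusted" reference values are all illustrative assumptions. Consistency is left out because it needs a second copy of the data to compare against.

```python
import re
from datetime import date, timedelta

# Hypothetical customer records; None marks a missing value
records = [
    {"id": 1, "email": "ana@example.com", "country": "PT", "updated": date(2024, 5, 1)},
    {"id": 2, "email": "bob@example", "country": "US", "updated": date(2021, 1, 15)},
    {"id": 3, "email": None, "country": "DE", "updated": date(2024, 4, 20)},
    {"id": 3, "email": "cy@example.com", "country": "FR", "updated": date(2024, 2, 2)},
]

# Hypothetical trusted reference source used to judge accuracy
reference_country = {1: "PT", 2: "US", 3: "DE"}

REQUIRED = ("id", "email", "country")
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")  # deliberately simple pattern


def completeness(rows):
    """Share of rows with every required field populated."""
    return sum(all(r[f] is not None for f in REQUIRED) for r in rows) / len(rows)


def validity(rows):
    """Share of non-missing emails that match the allowed pattern."""
    emails = [r["email"] for r in rows if r["email"] is not None]
    return sum(bool(EMAIL_RE.match(e)) for e in emails) / len(emails)


def uniqueness(rows):
    """Distinct IDs divided by row count (1.0 means no duplicated keys)."""
    return len({r["id"] for r in rows}) / len(rows)


def timeliness(rows, as_of, max_age_days=365):
    """Share of rows updated within the freshness window."""
    limit = as_of - timedelta(days=max_age_days)
    return sum(r["updated"] >= limit for r in rows) / len(rows)


def accuracy(rows, reference):
    """Share of rows whose country agrees with the trusted reference."""
    return sum(reference.get(r["id"]) == r["country"] for r in rows) / len(rows)


as_of = date(2024, 6, 1)
scores = {
    "completeness": completeness(records),
    "validity": validity(records),
    "uniqueness": uniqueness(records),
    "timeliness": timeliness(records, as_of),
    "accuracy": accuracy(records, reference_country),
}
for name, value in scores.items():
    print(f"{name:<12} {value:.2f}")
```

With this sample, every dimension scores 0.75 except validity (0.67). Note how the two rows with `id` 3 lower uniqueness and, because they disagree on `country`, accuracy as well.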

All seven of these data quality dimensions are important, but your organization may need to emphasize some more than others. It depends on the specific use cases you’re supporting. For example, the pharmaceuticals industry requires accuracy, while financial services firms must prioritize validity.
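One way to reflect these priorities is to combine per-dimension scores into a weighted average, score = Σ(w × s) / Σw, with weights chosen per use case. The Python sketch below is a hypothetical illustration: the dimension scores and the weights are made up, chosen only to mirror the example of a pharmaceutical use case that emphasizes accuracy and a financial services use case that emphasizes validity.

```python
# Hypothetical per-dimension scores (0.0 to 1.0) for one dataset
dimension_scores = {"accuracy": 0.90, "validity": 0.60, "completeness": 0.80}

# Hypothetical weights: each use case emphasizes a different dimension
WEIGHTS = {
    "pharma": {"accuracy": 3, "validity": 1, "completeness": 1},
    "finance": {"accuracy": 1, "validity": 3, "completeness": 1},
}


def weighted_score(scores: dict, weights: dict) -> float:
    """Weighted average of dimension scores: sum(w * s) / sum(w)."""
    total_weight = sum(weights.values())
    return sum(weights[d] * scores[d] for d in weights) / total_weight


for use_case, weights in WEIGHTS.items():
    print(use_case, round(weighted_score(dimension_scores, weights), 2))
```

The same dataset scores 0.82 for the pharmaceutical weighting but 0.70 for the financial services weighting, because its weak validity counts three times as much in the latter.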

Examples of Data Quality Metrics

Some data quality metrics are consistent across organizations and industries. For example, most organizations need to ensure that customer billing and shipping information is accurate, that a website provides all the necessary details about products and services, and that employee records are up to date and correct.

Here are some examples related to different industries:

- Healthcare: Healthcare organizations need complete, correct, unique patient records. This drives proper treatment, accurate billing, risk management and more effective product pricing.

- Public sector: Public sector agencies need complete, consistent, accurate data about constituents, proposed initiatives and current projects to understand how well they’re meeting their goals.

- Financial services: Financial services firms must identify and protect sensitive data, automate reporting processes, and monitor and remediate regulatory compliance issues.

- Manufacturing: Manufacturers need to maintain accurate customer and vendor records. They also need to be notified in a timely way of quality assurance issues and maintenance needs. And they need to track overall supplier spend for opportunities to reduce operational costs.

Data Quality Issues to Look Out For

The potential ramifications of poor data quality range from minor inconvenience to business failure. Data quality issues waste time, reduce productivity and drive up costs. They can also erode customer satisfaction, damage brand reputation or force an organization to pay heavy penalties for regulatory noncompliance. They may even threaten the safety of customers or the public.

Here are a few examples of companies that faced the consequences of data quality issues and found a way to address them:

- Poor data quality conceals valuable cross-sell and upsell opportunities. This leaves a company struggling to identify gaps in its offerings that might inspire innovative products and services or allow it to tap into new markets. Nissan Europe’s customer data was unreliable and scattered across various disconnected systems. This made it difficult for the company to generate personalized offers and target them effectively. By improving data quality, the company now has a better understanding of its current and prospective customers. This helped improve customer communications and raise conversion rates while reducing marketing costs.

- Poor data quality wastes time and forces rework when manual processes fail or have to be checked repeatedly for accuracy. CA Technologies faced the prospect of spending months manually correcting and enhancing customer contact data for a major Salesforce migration. By incorporating automated email verification and other data quality measures into the migration and integration process, the company was able to use a smaller migration team than expected. It completed the project in a third of the allotted time with measurably better data.

The Relationship Between Data Quality and Data Governance

Data governance serves as the overarching framework that guides and governs data-related activities within an organization. Data quality is a critical aspect of data governance, which also works to uphold the data quality dimensions listed above. Data governance and data quality are interdependent and mutually reinforcing: effective data governance provides the structure necessary to establish high data quality standards.

How data governance supports data quality improvement

Data governance supports data quality improvement by establishing data quality responsibilities across the organization. This ensures that individuals or teams are accountable for data quality. Data governance also provides the necessary authority to enforce data quality standards. Through data governance, organizations can allocate resources, define workflows and implement data quality tools to support data quality improvement initiatives.

How data governance establishes data quality standards and policies

Data governance facilitates collaboration between the different stakeholders involved in data management and data quality, fostering a culture of data quality awareness and improvement. It defines data quality standards and policies based on industry best practices and regulatory requirements, and it keeps those standards aligned with business objectives and stakeholders’ needs. Data governance also establishes policies and procedures for data quality assessment, measurement and monitoring, enabling organizations to continuously observe and improve data quality.

The impact of data governance on data integrity and accuracy

Data governance ensures the implementation of data validation rules and mechanisms to maintain data integrity. Through data governance, organizations can establish data quality controls, data quality checks and data profiling techniques to identify and address data inaccuracies. Data governance also enforces data quality improvement processes, such as data cleansing, data enrichment and data remediation, to enhance data accuracy and resolve data quality issues. By implementing data governance practices, organizations can establish data quality frameworks and data quality metrics to measure and monitor data integrity and accuracy.

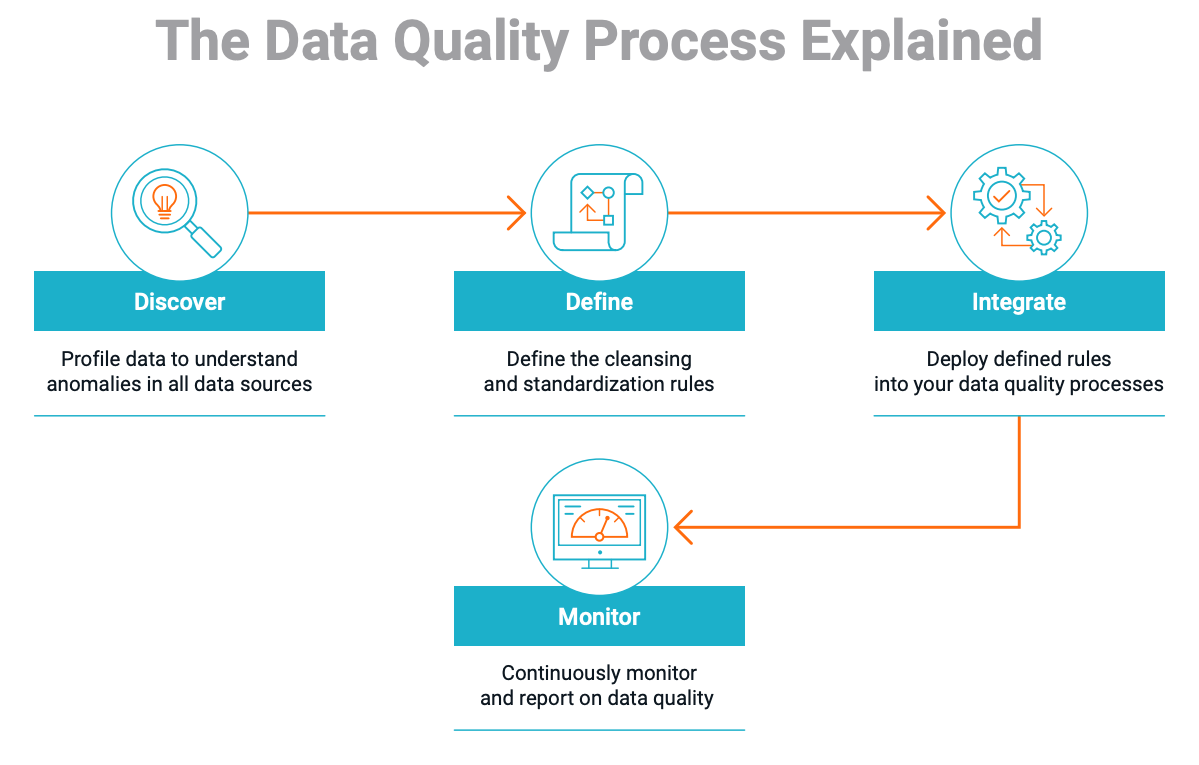

Four Steps to Start Improving Your Data Quality

- Discover

- Define rules

- Apply rules

- Monitor and manage

Discover: You can only plan your data quality journey once you understand your starting point. To do that, you’ll need to assess the current state of your data: what you have, where it resides, how sensitive it is, how it relates to other data and what quality issues it has.
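A first-pass profile can be computed with a few lines of code. This Python sketch reports, for each column, the number of missing values, the number of distinct values, the observed value types and whether any value looks like an email address, a crude stand-in (our assumption) for sensitivity tagging. The sample rows and the email heuristic are hypothetical; dedicated profiling tools go much further, for example by inferring relationships between tables.

```python
import re
from collections import Counter

EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")  # simple heuristic, not a full validator

# Hypothetical sample rows pulled from one source
rows = [
    {"id": 1, "email": "ana@example.com", "amount": 12.5},
    {"id": 2, "email": None, "amount": "n/a"},
    {"id": 3, "email": "bo@example.com", "amount": 7.0},
]


def profile(rows: list[dict]) -> dict:
    """Summarize each column: missing values, distinct values, types, email-like values."""
    columns = {key for row in rows for key in row}
    report = {}
    for col in sorted(columns):
        values = [row.get(col) for row in rows]
        present = [v for v in values if v is not None]
        report[col] = {
            "missing": len(values) - len(present),
            "distinct": len(set(present)),
            "types": dict(Counter(type(v).__name__ for v in present)),
            # Crude sensitivity hint: any value that looks like an email address
            "looks_like_email": any(isinstance(v, str) and EMAIL_RE.match(v) for v in present),
        }
    return report


for col, stats in profile(rows).items():
    print(col, stats)
```

The mixed `float` and `str` types in `amount` are exactly the kind of issue discovery should surface before any rules are written.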

Define rules: The information you gather during the discovery phase shapes your decisions about the data quality measures you need and the rules you’ll create to achieve the desired end state. For example, you may need to cleanse and deduplicate data, standardize its format or discard data from before a certain date. Note that this is a collaborative process between business and IT.
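Rules are easier to review with business stakeholders when they are written down as data, with a plain-language description, rather than buried in pipeline code. The Python sketch below expresses two of the rule types mentioned above, standardizing a format and discarding data from before a certain date, as a small declarative catalog. The `Rule` class, the rule names, the field names and the 2020-01-01 cutoff are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable, Optional


@dataclass(frozen=True)
class Rule:
    """Hypothetical rule definition: an optional fix applied before a check."""
    name: str
    description: str  # plain-language wording that business users can review
    check: Callable[[dict], bool]
    fix: Optional[Callable[[dict], dict]] = None


CUTOFF = date(2020, 1, 1)  # hypothetical: discard data from before this date

RULES = [
    Rule(
        name="country_code_format",
        description="Country is a two-letter upper-case code",
        check=lambda r: len(r["country"]) == 2 and r["country"].isalpha() and r["country"].isupper(),
        fix=lambda r: {**r, "country": r["country"].strip().upper()},
    ),
    Rule(
        name="on_or_after_cutoff",
        description=f"Record is dated on or after {CUTOFF.isoformat()}",
        check=lambda r: r["created"] >= CUTOFF,
    ),
]


def evaluate(record: dict, rules: list[Rule]) -> tuple[dict, list[str]]:
    """Apply each rule's fix (if any) in order, then collect failed checks."""
    failed = []
    for rule in rules:
        if rule.fix is not None:
            record = rule.fix(record)
        if not rule.check(record):
            failed.append(rule.name)
    return record, failed


# The rule catalog doubles as documentation for business and IT reviewers
for rule in RULES:
    print(f"{rule.name}: {rule.description}")

fixed, failed = evaluate({"country": " pt", "created": date(2019, 6, 30)}, RULES)
print(fixed, failed)
```

Here the country code " pt" is standardized to "PT" and passes, while the 2019 record is flagged by `on_or_after_cutoff`, so it can be discarded.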

Apply rules: Once you’ve defined rules, integrate them into your data pipelines. Don’t get stuck in a silo; your data quality tools need to be integrated across all data sources and targets in order to remediate data quality issues across the entire organization.
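Applying rules inside a pipeline typically means running the same checks on every source and setting failing records aside for remediation instead of silently dropping them. This Python sketch assumes two hypothetical sources (`erp` and `crm`) and two illustrative rules; the quarantine list stands in for whatever remediation queue or table a real pipeline would use.

```python
from typing import Callable, Iterable

# Hypothetical rules: rule name -> predicate that must hold for a record
RULES: dict[str, Callable[[dict], bool]] = {
    "has_customer_id": lambda r: bool(r.get("customer_id")),
    "non_negative_amount": lambda r: isinstance(r.get("amount"), (int, float)) and r["amount"] >= 0,
}


def quality_gate(records: Iterable[dict], source: str):
    """Split records into those that pass every rule and those to quarantine."""
    passed, quarantined = [], []
    for record in records:
        failed = [name for name, rule in RULES.items() if not rule(record)]
        if failed:
            # Keep the source and failed rule names so the record can be remediated
            quarantined.append({"source": source, "record": record, "failed": failed})
        else:
            passed.append(record)
    return passed, quarantined


# The same rules run against every source (source names are hypothetical)
sources = {
    "erp": [{"customer_id": "C1", "amount": 100.0}, {"customer_id": "", "amount": 5}],
    "crm": [{"customer_id": "C2", "amount": -3}],
}

all_passed, all_quarantined = [], []
for name, batch in sources.items():
    ok, bad = quality_gate(batch, name)
    all_passed.extend(ok)
    all_quarantined.extend(bad)

print(len(all_passed), "passed")
for item in all_quarantined:
    print(item["source"], item["failed"])
```

Because the rule set is shared rather than copied into each source’s pipeline, a rule change applies everywhere at once.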

Monitor and manage: Data quality is not a one-and-done exercise. To maintain it, you need to be able to monitor and report on all data quality processes continuously, on-premises and in the cloud, using dashboards, scorecards and visualizations.
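Continuous monitoring comes down to recording the same metrics on every run and comparing them against agreed targets. The Python sketch below keeps a hypothetical history of per-run scores, builds a plain-text scorecard and flags any metric that falls below its target. The run dates, metric names, values and the 0.95 and 0.99 targets are illustrative assumptions; in practice the history would live in a database and feed a dashboard.

```python
from datetime import date

# Hypothetical targets agreed with the business for each metric
TARGETS = {"completeness": 0.95, "validity": 0.99}

# Hypothetical history: one entry per pipeline run
history = [
    {"run": date(2024, 6, 1), "completeness": 0.97, "validity": 0.995},
    {"run": date(2024, 6, 2), "completeness": 0.96, "validity": 0.992},
    {"run": date(2024, 6, 3), "completeness": 0.91, "validity": 0.993},
]


def scorecard(history, targets):
    """Return one text line per run plus a list of (run, metric, value) breaches."""
    lines, breaches = [], []
    for entry in history:
        cells = []
        for metric, target in targets.items():
            value = entry[metric]
            status = "OK" if value >= target else "BELOW"
            cells.append(f"{metric}={value:.3f} {status}")
            if value < target:
                breaches.append((entry["run"], metric, value))
        lines.append(f"{entry['run'].isoformat()}  " + "  ".join(cells))
    return lines, breaches


lines, breaches = scorecard(history, TARGETS)
print("\n".join(lines))
for run, metric, value in breaches:
    print(f"ALERT {run}: {metric} {value:.2f} is below target {TARGETS[metric]:.2f}")
```

Only the June 3 completeness score breaches its target here. Tracking the history also shows the downward trend across the three runs, which a single point-in-time check would miss.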

Data Quality Customer Success Stories

Hackensack Meridian Health

Hackensack Meridian Health is the largest health system in New Jersey. It wanted to address rapid growth through mergers and acquisitions (M&A) by reconciling patient data from multiple electronic medical records (EMR) systems. The health system routed master data back to disparate EMR systems and automatically cataloged metadata from 15+ data sources. This gave it the data quality needed to discover, understand and validate millions of data assets. It also connected siloed patient encounter data across duplicates and reduced the total number of patient records from 6.5 million to 3.2 million, about 49% of the original count.

AIA Singapore

One of Singapore’s leading financial services and insurance firms, AIA Singapore deployed Informatica Data Quality to profile its data, track key performance indicators (KPIs) and perform remediation. Higher-quality data creates a deeper understanding of customer information and other critical business data, which in turn is helping the firm optimize sales, decision-making and operational costs.

Start Unlocking the Value of Your Data

Data is everywhere and data quality is critical to making the most of it. Keep these principles in mind as you work to improve your data quality:

- Make it an enterprise-wide strategic initiative.

- Emphasize the importance of data quality to data governance.

- Integrate data quality into your operations.

- Collaborate with business users to contextualize data and assess its value.

- Extend data quality to new areas (data lakes, AI, IoT) and new data sources.

- Leverage AI / ML to automate repetitive tasks like merging records and pattern matching.

All of these become much easier with the Informatica Intelligent Data Management Cloud (IDMC), which incorporates data quality into a broader infrastructure that touches all enterprise data.
